We’ve made every attempt to make this as straightforward as possible, but there’s a lot more ground to cover here than in the first part of the guide. If you find glaring errors or have suggestions to make the process easier, let us know on our discord.

With that out of the way, let’s talk about what you need to get 3D acceleration up and running:

If you’ve arrived here without context, check out part one of the guide here.

General

- A Desktop. The vast majority of laptops are completely incompatible with passthrough on Mac OS.

- A working install from part 1 of this guide, set up to use virt-manager

- A motherboard that supports IOMMU (most AMD chipsets since 990FX, most mainstream and HEDT chipsets on Intel since Sandy Bridge)

- A CPU that fully supports virtualization extensions (most modern CPUs barring the odd exception, like the i7-4770K and i5-4670K. Haswell refresh K chips e.g. ‘4X90K’ work fine.)

- 2 GPUs with different PCI device IDs. One of them can be an integrated GPU. Usually this means just 2 different models of GPU, but there are some exceptions, like the AMD HD 7970/R9 280X or the R9 290X and 390X. You can check here (or here for AMD) to confirm you have 2 different device IDs. You can work around this problem if you already have 2 of the same GPU, but it isn’t ideal. If you plan on passing multiple USB controllers or NVMe devices it may also be necessary to check those with a tool like lspci.

- The guest GPU also needs to support UEFI boot. Check here to see if your model does.

- Recent versions of Qemu (3.0-4.0) and Libvirt.

AMD CPUs

- The most recent mainline linux kernel (all platforms)

- Bios prior to AGESA 0.0.7.2 or a patched kernel with the workaround applied (Ryzen)

- Most recent available bios (ThreadRipper)

- GPU isolation fixes applied, e.g. CSM toggle and/or efifb:off (Ryzen)

- ACS patch (lower end chipsets or highly populated pcie slots)

- A second discrete GPU (most AMD CPUs do not ship with an igpu)

Intel CPUs

- ACS patch (only needed if you have many expansion cards installed in most cases. Mainstream and budget chipsets only, HEDT unaffected.)

- A second discrete GPU (HEDT and F-sku CPUs only)

Nvidia GPUs

- A 700 Series Card. High Sierra works up to 10 series cards, but Mojave ends support for 9, 10, 20 and all future Nvidia GPUs. Cards older than the 700 series may not have UEFI support, making them incompatible.

- A google search to make sure your card is compatible with Mac OS on Macs/hackintoshes without patching or flashing.

AMD GPUs

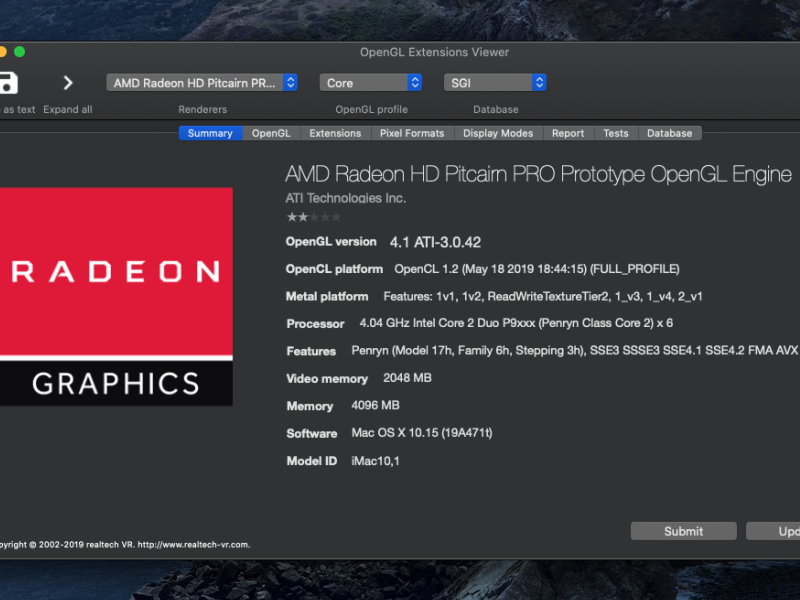

- A UEFI compatible Card. AMD’s refresh cycle makes this a bit more complicated to work out, but generally pitcairn chips and newer work fine — check your card’s bios for “UEFI Support” on techpowerup to confirm.

- A card without the Reset Bug (Anything older than Hawaii is bug free but it’s a total crap-shoot on any newer card. 300 series cards may also have Mac OS specific compatibility issues. Vega and Fiji seem especially susceptible)

- A google search to make sure your card is compatible with Mac OS on Macs/hackintoshes without patching or flashing.

Getting Started with VFIO-PCI

Provided you have hardware that supports this process, it should be relatively straightforward.

First, you want to enable virtualization extensions and IOMMU in your UEFI. The exact names and locations vary by vendor and motherboard. Virtualization is usually titled something like “virtualization support,” “VT-x,” or “SVM.” IOMMU is usually labelled “VT-d” or “AMD-Vi” if not just “IOMMU support.”

Once you’ve enabled these features, you need to tell Linux to use them, as well as which PCI devices to reserve for your VM. The best way of going about this is changing your kernel command line boot options, which you do by editing your bootloader’s configuration files. We’ll be covering Grub 2 here because it’s the most common. Systemd-boot distributions like Pop!_OS will have to do things differently.

Run lspci -nnk | grep "VGA\|Audio". This will output a list of installed devices relevant to your GPU. You can also just run lspci -nnk to get all attached devices, in case you want to pass through something else, like an NVMe drive or a USB controller. Look for the device IDs for each device you intend to pass through. For example, my GTX 1070 is listed as [10de:1b81], with [10de:10f0] for the HDMI audio. You need to use every device ID associated with your device, and most GPUs have both an audio controller and VGA. Some cards, in particular VR-ready Nvidia GPUs and the new 20 series GPUs, will have more devices you’ll need to pass, so refer to the full output to make sure you got all of them.
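Copying IDs by hand is error-prone, so here is a minimal sketch that pulls every [vendor:device] pair out of lspci output and joins them with commas. The function name collect_ids is made up for this example, and it assumes you feed it plain lspci -nn output (without -k, since the extra Subsystem lines carry their own IDs that you don't want in the list).

```shell
#!/bin/sh
# collect_ids: read `lspci -nn` style lines on stdin and print all
# [vendor:device] IDs as one comma-separated list.
# Class codes such as [0300] contain no colon, so the pattern skips them.
collect_ids() {
    grep -o '\[[0-9a-f]\{4\}:[0-9a-f]\{4\}\]' | tr -d '[]' | paste -sd, -
}

# Example usage (slot 01:00 is only a placeholder for your GPU's slot):
#   lspci -nn -s 01:00 | collect_ids
```

For the GTX 1070 mentioned above, this should give you a string you can paste straight after vfio-pci.ids=, but still compare it against the full lspci output before trusting it.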

If two devices you intend to pass through have the same ID, you will have to use a workaround to make them functional. Check the troubleshooting section for more information.
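If you aren't sure whether any of your devices share an ID, a quick check along these lines can help. This is only a sketch: duplicate_ids is a hypothetical helper name, and it expects lspci -nn output on stdin.

```shell
#!/bin/sh
# duplicate_ids: print every vendor:device ID that appears more than once
# in `lspci -nn` style input. No output means every ID is unique.
duplicate_ids() {
    grep -o '\[[0-9a-f]\{4\}:[0-9a-f]\{4\}\]' | tr -d '[]' | sort | uniq -d
}

# Example usage:
#   lspci -nn | duplicate_ids
```

Many systems legitimately have repeated IDs for things like PCI bridges or onboard USB controllers. A duplicate only matters if it belongs to a device you plan to pass through while its twin stays on the host.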

Once you have the IDs of all the devices you intend to pass through taken down, it’s time to edit your grub config:

$ sudo [your favorite editor e.g. nano, kate, vim] /etc/default/grub

It should look something like this:

GRUB_TIMEOUT=5
GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"
GRUB_DEFAULT=saved
GRUB_DISABLE_SUBMENU=true
GRUB_TERMINAL_OUTPUT="console"
GRUB_CMDLINE_LINUX="nomodeset rdblacklist=nouveau rhgb quiet nouveau.modeset=0 rd.driver.blacklist=nouveau plymouth.ignore-udev"
GRUB_DISABLE_RECOVERY="true"

In the line GRUB_CMDLINE_LINUX= add these arguments, separated by spaces: intel_iommu=on OR amd_iommu=on, iommu=pt and vfio-pci.ids= followed by the device IDs you want to use on the VM separated by commas. For example, if I wanted to pass through my GTX 1070, I’d add vfio-pci.ids=10de:1b81,10de:10f0. Save and exit your editor.
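If you'd rather script the edit than do it by hand, something like the following sketch could work. The helper name add_kernel_args is invented for this example, and it assumes GRUB_CMDLINE_LINUX sits on a single double-quoted line as in the sample above. It is safest to run it on a copy of the file and diff the result before replacing /etc/default/grub.

```shell
#!/bin/sh
# add_kernel_args FILE ARG...
# Appends the given arguments inside the closing quote of the
# GRUB_CMDLINE_LINUX="..." line in FILE. A backup is kept as FILE.bak.
# Only the exact GRUB_CMDLINE_LINUX variable is touched, not
# GRUB_CMDLINE_LINUX_DEFAULT.
add_kernel_args() {
    file=$1
    shift
    sed -i.bak "s|^\(GRUB_CMDLINE_LINUX=\".*\)\"\$|\1 $*\"|" "$file"
}

# Example usage on a scratch copy (IDs are the GTX 1070 ones from the text):
#   cp /etc/default/grub /tmp/grub.new
#   add_kernel_args /tmp/grub.new intel_iommu=on iommu=pt vfio-pci.ids=10de:1b81,10de:10f0
#   diff /etc/default/grub /tmp/grub.new
```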

Run grub-mkconfig -o /boot/grub/grub.cfg. This path may be different for you on different distros, so make sure to check that this is the location of your grub.cfg prior to running this and change it as necessary. The tool to do this may also be different on certain distributions, e.g. update-grub.

Reboot.

Verifying Changes

Now that you have your devices isolated and the relevant features enabled, it’s time to check that your machine is registering them properly.

Grab iommu.sh from our companion repo, make it executable with chmod +x iommu.sh, and run it with ./iommu.sh to see your IOMMU groups. No output means you didn’t enable one of the relevant UEFI features, or didn’t revise your kernel command line options correctly. If the GPU and the other devices you want to pass to the guest are in their own groups, move on to the next section. If not, refer to the troubleshooting section.
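If you can't get to the repo, the idea behind a group listing is simple enough to sketch. To be clear, this is not the iommu.sh from the companion repo, just a rough stand-in that walks the kernel's sysfs tree. The function name and the optional sysfs root argument are assumptions made for the example.

```shell
#!/bin/sh
# list_iommu_groups [SYSFS_ROOT]
# Prints "group N: ADDRESS" for every PCI device in every IOMMU group.
# SYSFS_ROOT defaults to /sys and exists only so the function can be
# pointed at a test directory.
list_iommu_groups() {
    root=${1:-/sys}
    for dev in "$root"/kernel/iommu_groups/*/devices/*; do
        [ -e "$dev" ] || continue      # glob matched nothing: no groups
        group=${dev%/devices/*}        # strip the device part of the path
        echo "group ${group##*/}: ${dev##*/}"
    done
}

# Example usage:
#   list_iommu_groups
#   lspci -nns 0000:01:00.0    # look up what a listed address actually is
```

As with the real script, no output at all suggests the IOMMU isn't enabled.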

Run dmesg | grep vfio to ensure that your devices are being isolated. No output means that the vfio-pci module isn’t loading and/or you did not enter the correct values in your kernel commandline options.
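dmesg output can scroll away or be noisy, so it can also help to ask sysfs directly which driver owns a device. Here is a small sketch of that check. The name bound_driver and the optional sysfs root argument are made up for this example.

```shell
#!/bin/sh
# bound_driver ADDRESS [SYSFS_ROOT]
# Prints the kernel driver currently bound to the PCI device at ADDRESS
# (for example 0000:01:00.0), or "none" if no driver is bound.
bound_driver() {
    link=${2:-/sys}/bus/pci/devices/$1/driver
    if [ -L "$link" ]; then
        basename "$(readlink "$link")"
    else
        echo none
    fi
}

# Example usage (the address is a placeholder for your own device):
#   bound_driver 0000:01:00.0
```

For a correctly isolated device you would expect this to print vfio-pci rather than nouveau, amdgpu, or similar.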

From here the process is straightforward. Start virt-manager (conversion from raw qemu is covered in part one) and make sure the native resolution of both the config.plist and the OVMF options match each other and the display resolution you intend to use on the GPU. If you aren’t sure, just use 1080p.

Click Add Hardware in the VM details, select PCI host device, select a device you’ve isolated with vfio-pci, and hit OK. Repeat for each device you want to pass through. Remove all Spice and QXL devices (including spice:channel), attach a monitor to the GPU, and boot into the VM. Install 3D drivers, and you’re ready to go. Note that you’ll need to add your mouse and keyboard to the VM as USB devices, pass through a USB controller, or set up evdev to get input in the guest at this point as well.
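If you prefer the command line to clicking through virt-manager, the same PCI host device can be described in libvirt XML. The sketch below generates that element from a PCI address. The helper name hostdev_xml, the file name gpu.xml, and the domain name macos in the usage comment are all placeholders, not anything defined earlier in this guide.

```shell
#!/bin/sh
# hostdev_xml ADDRESS
# Prints a libvirt <hostdev> element for a PCI address written as
# domain:bus:slot.function, for example 0000:01:00.0.
hostdev_xml() {
    addr=$1
    domain=${addr%%:*}      # 0000
    rest=${addr#*:}         # 01:00.0
    bus=${rest%%:*}         # 01
    slotfn=${rest#*:}       # 00.0
    slot=${slotfn%.*}       # 00
    fn=${slotfn#*.}         # 0
    cat <<EOF
<hostdev mode='subsystem' type='pci' managed='yes'>
  <source>
    <address domain='0x$domain' bus='0x$bus' slot='0x$slot' function='0x$fn'/>
  </source>
</hostdev>
EOF
}

# Example usage (domain name and file name are placeholders):
#   hostdev_xml 0000:01:00.0 > gpu.xml
#   virsh attach-device macos gpu.xml --config
```

You would repeat this once per function of the card, so a GPU with HDMI audio needs both the .0 and .1 addresses.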

If all goes well, you just need to install drivers and you’re ready to use 3D on your Mac OS VM.

Mac OS VM Networking

Fixing What Ain’t Broke:

NAT is fine for most people, but if you use SMB shares or need to access a NAS or other networked device, it can make that difficult. You can switch your network device to macvtap, but that isolates your VM from the host machine, which can also present problems.

If you want access to other networked devices on your guest machine without stopping guest-host communication, you’ll have to set up a bridged network for it. There are several ways to do this, but we’ll be covering the methods that use NetworkManager, since that’s the most common backend. If you use wicd or systemd-networkd, refer to documentation on those packages for bridge creation and configuration.

Via Network GUI:

This process can be done completely in the GUI on modern desktop environments. Open the network settings dialog, add a connection, select bridge as the type, add your network interface as the slave device, and then activate the bridge (sometimes you need to restart NetworkManager if the changes don’t take effect immediately). From there, all you need to do is add the bridge as a network device in virt-manager.

Via NMCLI:

Not everyone uses a full desktop environment, but you can do this with nmcli as well:

Run ifconfig or nmcli to get the names of your devices; they’ll be relevant in the next steps. Take down or copy the name of the adapter you use to connect to the internet.

Next, run these commands, substituting the placeholders with the device name of the network adapter you want to bridge to the guest. They create a bridge device, connect the bridge to your network adapter, and create a tap for the guest system, respectively:

$ nmcli connection add type bridge ifname br0 con-name bridge1
$ nmcli connection add type bridge-slave ifname [NETWORKADAPTER] con-name bridge-slave master br0
$ nmcli connection add type tun ifname tap0 con-name tunnel1 mode tap owner 1000
$ nmcli connection mod tunnel1 connection.slave-type bridge connection.master br0

From here, remove the NAT device in virt-manager, and add a new network device with the connection set to br0: host device tap0. You may need to restart NetworkManager for the device to activate properly.
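To avoid typos when substituting the adapter name, you could print the commands first and review them before running anything. The sketch below does only that. bridge_commands is a made-up helper, the adapter name in the usage comment is a placeholder, and the tap owner is treated as a numeric user ID (1000 is commonly the first regular user, but check yours with id -u). It uses br0, the interface created by the first command, as the bridge master throughout.

```shell
#!/bin/sh
# bridge_commands ADAPTER [OWNER_UID]
# Prints (does not run) the nmcli commands for a bridge on ADAPTER with a
# tap device owned by OWNER_UID. OWNER_UID defaults to 1000.
bridge_commands() {
    nic=$1
    owner=${2:-1000}
    cat <<EOF
nmcli connection add type bridge ifname br0 con-name bridge1
nmcli connection add type bridge-slave ifname $nic con-name bridge-slave master br0
nmcli connection add type tun ifname tap0 con-name tunnel1 mode tap owner $owner
nmcli connection mod tunnel1 connection.slave-type bridge connection.master br0
EOF
}

# Example usage (enp3s0 is a placeholder adapter name):
#   bridge_commands enp3s0 "$(id -u)"
```

Once the output looks right, you can run the printed lines one at a time.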

NOTE: Wireless adapters may not work with this method. The ones that do need to support AP/Mesh Point mode and have multiple channels available. Check for these by running iw list. From there you can set up a virtual AP with hostapd and connect to that with the bridge. We won’t be covering the details of this process here because it’s very involved and requires a lot of prior knowledge about linux networking to set up correctly.

Other Options:

You can also pass through a PCIe NIC to the guest if you happen to have a Mac OS compatible model lying around and you’re comfortable with adding kexts to Clover. This is also the best way to get AirDrop working if you need it.

If all else fails, you can manually specify routes between the host and guest using macvtap and ip, or set up a macvlan. Both are complex and require networking knowledge.

Input Tweaks

Emulated input might be laggy, or give you problems with certain input combinations. This can be fixed using several methods.

Attach HIDs as USB Host devices

This method is the easiest, but has a few drawbacks. Chiefly, you can’t switch your keyboard and mouse back to the host system if the VM crashes. It may also need to be adjusted if you change where your devices are plugged in on the host. Just click the add hardware button, select usb host device, and then select your keyboard and mouse. When you start your VM, the devices will be handed off.

Use Evdev

This method uses a technique that allows both good performance and switchable inputs. We have a guide on how to set it up here. Note that because OS X does not support PS2 Input out of the box, you need to replace your ps/2 devices as follows in your xml:

<input type='mouse' bus='usb'>

<address type='usb' bus='0' port='2'/>

</input>

<input type='keyboard' bus='usb'>

<address type='usb' bus='0' port='3'/>

</input>

If you can’t get usb devices working for whatever reason (usually due to an outdated qemu version) you can add the VoodooPS2 Kext to your ESP to enable ps2 input. This may limit compatibility with new releases, so make sure to check that you have an alternative before committing.

Use Barrier/Synergy

Synergy and Barrier are networked input packages that allow you to control your host and/or guest on the same machine, or remotely. They offer convenient input, but will not work with certain networking configurations. Synergy is paid software, but Barrier is free and isn’t hard to set up, with one caveat on Mac OS: you either want to stick with a version prior to 1.3.6 or install the binary manually like so:

1. Extract the zip file to any location (usually double click will do this)
2. Open Terminal, and cd to the extracted directory (e.g. /Users/my-name/Downloads/extracted-dir/)
3. Copy the binaries to /usr/bin using: sudo cp barrier* /usr/bin
4. Correct the permissions and ownership: sudo chown root:wheel /usr/bin/barrier*; sudo chmod 555 /usr/bin/barrier*

After that, just follow a synergy configuration guide (barrier is just an open source fork of synergy) to set up your merged input. It’s usually as simple as opening the app, setting one as server, entering the network address of the other, and then arranging the virtual merged screens accordingly. Note that if you experience bad performance on your guest with synergy/barrier, you can make the guest the server and pass usb devices as described above, but this will make your input devices unavailable on the host if the VM crashes.

Use a USB Controller and Hardware KVM Switch

Probably the most elegant solution. You need $20-60 in hardware to do it, but it allows switching your inputs without prior configuration or problems if the guest VM crashes. Simply isolate and pass through a usb controller (as you would a gpu in the section above) and plug a usb kvm switch into a port on that controller as well as a usb controller on the host. Plug your keyboard and mouse into the kvm switch, and press the button to switch your inputs from one to the other.

Some USB3 controllers are temperamental and don’t like being passed through, so stick to usb2 or experiment with the ones you have. Typically newer Asmedia and Intel ones work best. If your built-in USB controller has issues it may still be possible to get it working using a 3rd party script, but this will heavily depend on how your kvm switch operates as well. Your best bet is just to buy a PCIe controller if the one you have doesn’t work.

Audio

By default, audio quality isn’t the best on OS X guest VMs. There are a few ways around it, but we suggest a hardware-based approach for the best reliability.

Hardware-Based Audio Passthrough

This method is fairly simple. Just buy a class compliant USB audio interface advertised as working in Mac OS, and plug it into a USB controller that you’ve passed through to the VM as described in the KVM switch section. If you need seamless audio between host and guest systems, we have a guide on how to get that working as well. We regard this option as the best solution if you plan on using both the host and guest system regularly.

HDMI Audio Extraction

If you’re already passing through a GPU, you can just use that as your audio output for the VM. Just use your monitor’s line out, or grab an audio extractor as described in the linked article above.

Pulseaudio/ALSA passthrough

You can pass through your VM audio via the ich9 device to your host system’s audio server. We have a guide that goes into detail on this process here.

CoreAudio to Jack

CoreAudio supports sending system sound through Jack, a versatile and powerful unix sound system. Jack supports networking, so it’s possible to connect the guest to the host over the network via Jack. Because Jack is fairly complex and this method requires a specific network setup to get it working, we’ll be saving the specifics of it for a future article. On Linux host systems, tools like Carla can make initial Jack setup easier.

Quality of Life

If you find yourself doing a lot of workarounds or want to customize things even further, these are some tools and resources that can make your life easier.

Clover Configurator

This is a tool that automates some aspects of managing clover and your ESP configuration. It can make things like adding kexts and defining hardware details (needed to get iMessage and other things working) easier. It may change your config.plist in a way that reduces compatibility, so be careful if you elect to use it.

InsanelyMac and AMD-OSX

Forums where people discuss hackintosh installation and maintenance. Many things that work in baremetal hackintoshes will work in a VM, so if you’re looking for tweaks that are only relevant to your software configuration, this is a good place to start.

Troubleshooting

As always, first steps when running into issues should be to read through dmesg output on the host after starting the VM and searching for common problems.

No output after passing through my GPU

Make sure you have a compatible version of Mac OS. Most Nvidia cards will only work on High Sierra and earlier, and 20 series cards will not work at all. Make sure you don’t have Spice or QXL devices attached, and follow the steps in the verification section to make sure that your vfio-pci configuration works. If it doesn’t, you may have to load the driver manually, but this isn’t the case on most modern Linux distributions.

Make sure that your config.plist and OVMF resolution match your monitor’s native resolution. How to edit these settings is covered in Part 1.

If all else fails, you can try passing a vbios to the card by downloading the relevant files from techpowerup and adding the path to them in your XML, usually something like <rom bar='on' file='/var/lib/libvirt/vbios/vbios.rom'/> in the pci device section that corresponds to the GPU.

Can’t Connect to SMB shares or see other networked devices

Change to a different networking setup as described in the networking section

iMessage/AirDrop/Apple Services not working

You have to configure these just like any other hackintosh. Consult online guides for procedure specifics.

Multiple PCI devices in the same IOMMU group

You need to install the ACS patch. Arch, fedora and Ubuntu all have prepatched kernel repos. Systemd-boot based ubuntu distributions like Pop!OS will need further work to get an installed kernel working. Refer to your distro documentation for exact procedure needed to switch or patch kernels otherwise. You’ll also need to add

2 identical PCI IDs

You’re going to have to add a script that isolates only 1 card early in the boot process. There’s several ways to do this, and our method may not work for you, but this is the methodology we suggest:

Open up a text editor as root and copy/paste this script:

#!/bin/sh
# Add one line per PCI device, changing the bus ID to match the devices you want passed through.
# For example, my 1070 is at 01:00.0 and 01:00.1, so I need 2 lines, as below.
# You can find your device bus IDs by running lspci -Dnn. Use lspci -Dnnd 'deviceid' to list specific devices.
# Do not delete the backslashes when changing bus IDs.
# Check full IDs by running ls /sys/bus/pci/devices and cross-referencing.
echo "vfio-pci" > /sys/bus/pci/devices/0000\:01\:00.0/driver_override
echo "vfio-pci" > /sys/bus/pci/devices/0000\:01\:00.1/driver_override
modprobe -i vfio-pci

Save it as /usr/bin/vfioverride.sh.

From there, run these commands as root:

# chmod 755 /usr/bin/vfioverride.sh
# chown root:root /usr/bin/vfioverride.sh

On Arch, as root, make a new file called pci-isolate.conf in /etc/modprobe.d, open it in an editor, and add the line install vfio-pci /usr/bin/vfioverride.sh to it (the path must match where you saved the script). Save it. Make sure modconf is listed in the HOOKS=( array section of your initrd config file, mkinitcpio.conf.

If you’re on fedora or RHEL, you can simply add the install line to install_items+= array and modconf/vfio-pci to the add_drivers+= array.

Then update your initial ramdisk using mkinitcpio, dracut, or update-initramfs depending on your distribution (Arch, RHEL/Fedora, and *Buntu respectively).

NOTE: Script installation methodology varies from distro to distro. You may have to add initramfs hooks for the script to take effect, or force graphics drivers to load later to prevent the card from being captured before it can be isolated. Refer to the Arch Wiki article for a different installation methodology if this one fails. You may also have to add the vfio-pci modules to initramfs hooks if your kernel doesn’t load the vfio-pci module automatically.

Reboot and verify your devices are isolated by checking lspci -nnk for them (if they show vfio-pci as the kernel driver in use, you’re good to go).

If not, set vfio-pci to load early with hooks and try again. If it still doesn’t work, you may need to compile a kernel that does not load the module and follow the archwiki guide on traditional setup.

The best preventative measure for this problem is to buy different cards in the first place.

I did everything instructed but the GPU still won’t isolate/VM crashes or hardlocks system on startup

Your graphics drivers are probably set to load earlier in the boot process than vfio-pci. You can fix this one of 2 ways:

- blacklisting the graphics driver early

- telling your initial ramdisk to load vfio-pci earlier than your graphics drivers

The first option can be achieved by adding amdgpu,radeon or nouveau to module_blacklist= in your kernel command line options (same way you added vfio device IDs in the first section of this tutorial.)

The second is done by adding vfio_pci vfio vfio_iommu_type1 vfio_virqfd to your initramfs early modules list, and removing any graphics drivers set to load at the same time. This process varies depending on your distro.

On mkinitcpio systems (Arch), you add these to the MODULES= section of /etc/mkinitcpio.conf and then rebuild your initramfs by running mkinitcpio -P.

On dracut systems (Fedora, RHEL, CentOS, Arch in future releases), you add these to a .conf file in the /etc/modules-load.d/ folder.
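Whichever tool your distro uses, the thing to confirm is that vfio_pci ends up ahead of any graphics driver in the early module list. Here is a rough sketch of that check for a space-separated module list such as the contents of MODULES=. The function name vfio_loads_first and the set of driver names it looks for are assumptions for illustration, so extend the pattern if your card uses a different driver.

```shell
#!/bin/sh
# vfio_loads_first "MODULE LIST"
# Succeeds (exit 0) only if vfio_pci is in the list and no graphics
# driver is named before it. Fails if vfio_pci is missing entirely.
vfio_loads_first() {
    seen_gpu=0
    for m in $1; do
        case $m in
            amdgpu|radeon|nouveau|nvidia*|i915)
                seen_gpu=1 ;;
            vfio_pci|vfio-pci)
                [ "$seen_gpu" -eq 0 ]
                return ;;          # status of the test above
        esac
    done
    return 1
}

# Example usage:
#   vfio_loads_first "vfio_pci vfio vfio_iommu_type1 vfio_virqfd" && echo ok
```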

Images Courtesy Foxlet, Pixabay

Consider Supporting us on Patreon if you like our work, and if you need help or have questions about any of our articles, you can find us on our Discord. We provide RSS feeds as well as regular updates on Twitter if you want to be the first to know about the next part in this series or other projects we’re working on.